
Previous research has demonstrated that AI models can outperform humans, as when Deep Blue defeated top human chess players. In this project, we designed and developed a Gomoku tutor powered by AlphaZero. Website:

Eyes darting or maintaining a steady gaze straight ahead. Heartbeat racing, or maintaining a slow, even rhythm. When we encounter these phenomena in another person, how do we respond, not just affectively but physiologically? Eye movements and heartbeats are among the most intuitively meaningful physiological characteristics that humans observe in one another. Without necessarily realizing it, we often respond empathetically.

The Center for the Development and Application of Internet of Things Technologies (CDAIT) and its cosponsors, the School of Public Policy and GT VentureLab, are pleased to announce the 2023 Student IoT Innovation Capacity Building Challenge call for project proposals.

Leveraging our internal assets and our ability to collect and process 3D data, we have produced a wide variety of physical representations.


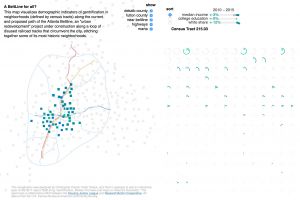

This map visualizes demographic indicators of gentrification in neighborhoods (defined by census tracts) along the current and proposed path of the Atlanta Beltline, an "urban redevelopment" project under construction along a loop of disused railroad tracks that encircle the city, stitching together some of its most historic neighborhoods.

In this abstract, we present an ongoing study comparing how high school students from underrepresented groups learn about complex adaptive systems using physical computing versus a screen-based simulation environment.

The T+ID Lab of Georgia Tech, in a joint project with public health researchers at Emory University and Morehouse School of Medicine, is developing a system to enhance trust and enthusiasm for at-home COVID testing.

How can living materials be combined with digital technologies? This project draws on scholarship in ecofeminism and consists of living wildflowers growing inside a plexiglass box. The memorial may seem closed and bounded, but it is in fact an open system, requiring porosity and exchange with the outside, as well as care from humans, in order to thrive.


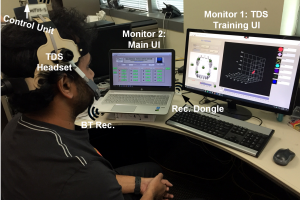

Assistive technologies (ATs) play a crucial role in the lives of individuals with severe disabilities by giving them greater autonomy in performing daily tasks. The Tongue Drive System (TDS), developed at the Georgia Tech Bionics Lab, is one such AT, enabling people with severe spinal cord injury (SCI) to be more independent. Earlier versions of the TDS have offered tongue motion and speech as means of controlling the mouse and entering keyboard input.

This MS-HCI project tested a wearable computing interface prototype for its usability as an assistive technology and for its social acceptability in both general and assistive-specific use in social settings.

Improving the well-being of people with mental illness requires not only clinical treatment but also social support. This research examines how major life transitions around mental illnesses are exhibited on social media and how social and clinical care intersect around these transitionary periods.

Using a list of common approved and regulated psychiatric drugs and a Twitter dataset of 300M posts from 30K individuals, we develop machine learning models to assess effects relating to mood, cognition, depression, anxiety, psychosis, and suicidal ideation. We observe that the use of specific drugs is associated with characteristic changes in an individual's psychopathology. We situate these observations in the psychiatry literature, with a deeper analysis of pre-treatment cues that predict treatment outcomes and post-treatment linguistic markers correlated with positive outcomes.


Users turn to social network sites and online forums to get advice from members of their networks. Individuals with autism adopt and use such computer-mediated communication technology differently from typical users. They need advice about everyday situations ranging from very simple operations to complex social activities. We propose to develop a Q&A system with a robust network of people whom the user is not likely to know but who may nonetheless be willing to provide advice on everyday situations.

This study explores the value of novel interfaces for supporting historic tours and reenactment practices at Atlanta's oldest public burial ground, Oakland Cemetery, through the themes of Victorian death culture, African American traditions, and change.

As part of the Jill Watson Suite of Tools for Education, Agent Smith enables anyone to build their own agents.